DATA AS A RESOURCE

Data inevitably has a cost, one that organisations struggle to contain and control despite their best endeavours. That is part of the problem: data is seen as a cost first and a resource second, if at all.

By re-orienting towards leveraging data as a business resource, organisations gain two tangible benefits:

1. Data consumption and the business requirements for data will align, providing greater data efficiency, which in itself will assist in controlling costs; and

2. Better data management, through the creation of known data inventories and data hierarchies that grade data in terms of requirement, purpose and, importantly, source. This is the foundation of data cost management.

ASK THE RIGHT QUESTIONS

The key lies in asking the right questions about data consumption trends, accessibility and sourcing:

1. What are my data requirements, current and projected?

2. What trends are driving changes to my requirements?

3. Why are these factors causing my data requirements to change?

4. How much control do I have over these factors, external and internal?

5. What are the best strategies to structure and manage my data requirements?

KYS – KNOW YOUR SOURCE

Knowing your source is fundamental to data needs, yet often misunderstood. It is not enough to have a vague trust in the source; institutions must have confidence in the actual data lineage and provenance. This requirement was driven initially by regulators, but data consumers now understand it to be highly relevant to their day-to-day business activities.

What all this means is that financial institutions, and indeed any organisation managing data, must start to understand their data flows. Until this happens, data will be seen as a cost, and that cost will only increase. This paper takes a brief look at the trends and issues that market participants and data consumers face in controlling their data expenditure.

PREDICTED EXPENDITURE TRENDS

Predicting future data expenditure is not hard: it is only going to increase. What is harder is predicting in which sectors costs will increase, and who is willing to pay. Below are 10 trends that are happening right now, although the balance between them will vary depending on upcoming economic uncertainties, which could provide alternative drivers of demand.

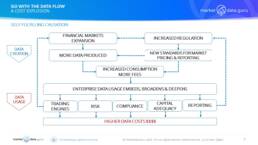

SELF-FULFILLING CAUSATION

According to DOMO’s 2018 report, ‘Over 2.5 quintillion bytes of data are created every single day, and it’s only going to grow from there. By 2020, it’s estimated that 1.7MB of data will be created every second for every person on earth.’

Data creates data; it is self-fulfilling, and nowhere more so than in financial institutions. Other businesses are catching up fast, however, especially those reliant on intellectual property rights, such as pharmaceuticals, aerospace and entertainment.

It is all driven by the fact that the same item of data can be used simultaneously for an unlimited number of purposes, by an unlimited number of companies, i.e. ‘The Snicker’s Effect’.

Then it all has to be stored somewhere...

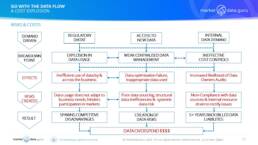

RISKS & COSTS

How does the lack of effective market data management become a risk and an increased cost to the business?

Multiple factors, both external and internal, drive breakdowns, but the cause is often natural entropy combined with a lack of adaptation, which leads to internal inertia. The weak link in the chain is often the human one: either well-intentioned engagement that tinkers with processes without clearly defining the desired outcomes, or negative engagement, where people do not fulfil their roles properly.

SUMMARY

Many data source owners believe that just because they have data, they can ‘sell’ it; this is a false assumption. Likewise, as consumers, many banks do not associate usage with utility: a British domestic equities trader is unlikely to need Australian energy prices. Banks try to work around this by creating ‘Gold Hierarchies’ of preferred sources and data for specific asset classes and markets, and/or by creating user profiles. This becomes a bigger challenge as more data sources come on stream and vendor disintermediation occurs, potentially leading to higher costs.
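To make the idea of a Gold Hierarchy concrete, the following is a minimal Python sketch, assuming a hierarchy is simply an ordered list of preferred vendors for each asset class and market, and that a user profile is the set of asset class and market pairs a role actually needs. The vendor names, the profile name and the functions `preferred_source` and `needs_data` are hypothetical illustrations, not any bank's or vendor's actual scheme.

```python
from typing import Optional

# Hypothetical Gold Hierarchy: for each (asset class, market) pair, vendor
# names in order of preference. All names are illustrative placeholders.
GOLD_HIERARCHY: dict[tuple[str, str], list[str]] = {
    ("equities", "UK"): ["VendorA", "VendorB"],
    ("energy", "AU"): ["VendorC", "VendorA"],
}

# Hypothetical user profiles: the (asset class, market) pairs each role needs.
USER_PROFILES: dict[str, set[tuple[str, str]]] = {
    "uk_equities_trader": {("equities", "UK")},
}


def preferred_source(asset_class: str, market: str,
                     available: set[str]) -> Optional[str]:
    """Return the highest-ranked source that is currently available, if any."""
    for vendor in GOLD_HIERARCHY.get((asset_class, market), []):
        if vendor in available:
            return vendor
    return None  # no preferred source defined or available


def needs_data(profile: str, asset_class: str, market: str) -> bool:
    """True only if the user profile lists this asset class and market."""
    return (asset_class, market) in USER_PROFILES.get(profile, set())


if __name__ == "__main__":
    # First choice (VendorA) is unavailable, so the hierarchy falls back.
    print(preferred_source("equities", "UK", {"VendorB"}))
    # A UK equities trader's profile does not include Australian energy.
    print(needs_data("uk_equities_trader", "energy", "AU"))
```

Running it prints `VendorB`, because the first-choice source is unavailable and the next one in the hierarchy is used, and then `False`, because the UK equities profile does not call for Australian energy prices. Keeping the hierarchy and the profiles as separate lookups reflects the text's point that preferred sources and user needs are two different controls on usage.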

In addition, restrictive multi-year vendor contracts with unfriendly rollover terms make it hard for data managers to plan ahead effectively, resulting in unnecessary costs. This means cost control strategies require constant validation and a three-level approach, remembering that what is ‘Must Have Data’ to one user is ‘Luxury Data’ to another.

To ensure the business continuously gets the data it needs to function effectively, data management and cost control are vital, and process is king. Market dynamics and external influences change the types and sources of data the consumer wants, so it is up to the business data strategists to plan ahead by identifying what future requirements are likely, and what the

This is not an unachievable objective; it requires trust in the process, internal and external communication, research and data leadership.

CONTACT

Keiren Harris 01 December 2022

Please contact info@marketdata.guru for a PDF copy of the article.

For information on our consulting services please email knharris@datacompliancellc.com